Natalia Díaz Rodríguez

Assistant Professor (Enseignant-Chercheur)

ENSTA ParisTech (Computer Science and Systems Engineering, U2IS)

Autonomous Systems and Robotics

INRIA Flowers Team

Biography

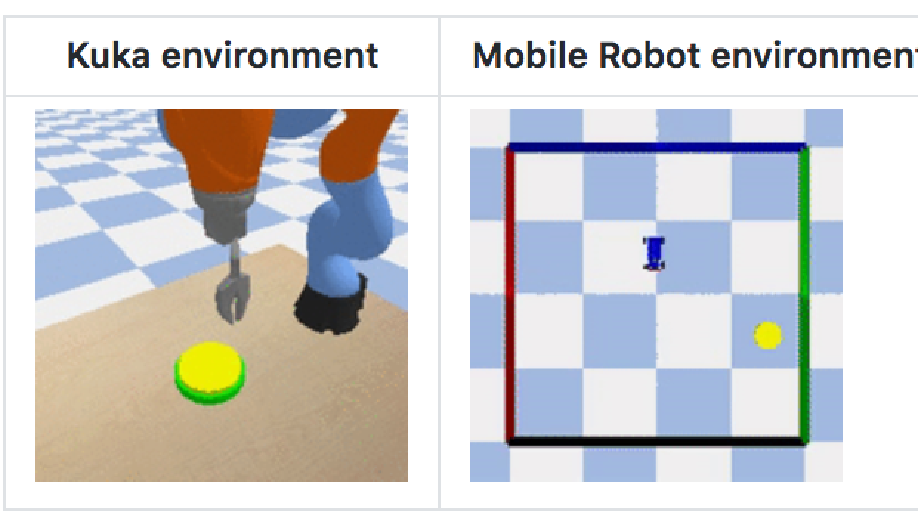

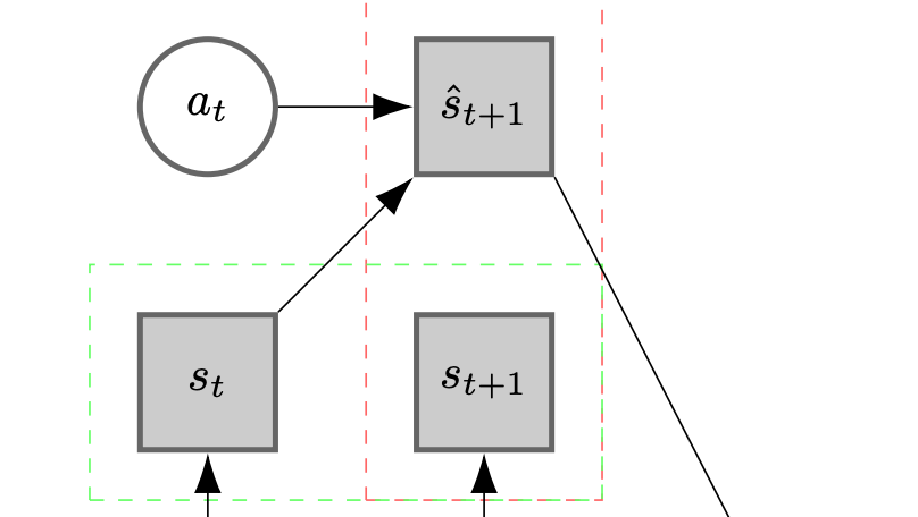

Natalia is an Assistant Professor of Artificial Intelligence at the Autonomous Systems and Robotics Lab (U2IS) at ENSTA ParisTech. She is also a member of the INRIA Flowers team on developmental robotics. Her research interests include deep, reinforcement and unsupervised learning, (state) representation learning, explainable AI and AI for social good. She works on open-ended learning and continual/lifelong learning, with applications in computer vision and robotics. Her background is in knowledge engineering (semantic web, ontologies and knowledge graphs), and she is interested in neural-symbolic approaches to practical applications of AI.

Interns, PhD students and Postdocs wanted

Positions are available on diverse topics: state representation learning, deep and reinforcement learning, explainable AI, and computer vision for robotics, autonomous systems and vehicles. Interested? Send a single PDF containing your CV and grades.

News

Paper "Intelligent Drone Swarm for Search and Rescue Operations at Sea" accepted at the NIPS 2018 workshop on AI for Good.

I will be co-organizing the ECML PKDD 2019 Continual Learning Workshop.

Interests

- Artificial Intelligence

- Deep learning

- Reinforcement Learning

- Continual Learning

- Open-ended Learning

- Symbolic AI

- Explainable AI

- AI for Good

- Robotics

Education

- Doctoral diploma in Innovation and Entrepreneurship, 2017
  European Institute of Innovation and Technology (EIT Digital), Sweden, France and Finland

- Double PhD in Artificial Intelligence, 2015
  Åbo Akademi University, Turku (Finland) and University of Granada (Spain)

- M.Sc. in Soft Computing and Intelligent Systems, 2012
  University of Granada (Spain)

- M.Sc. in Computer Engineering, 2010
  University of Granada (Spain)